The compound effect of AI tooling

Each piece I’ve written about — rules, skills, subagents, the portable file layout, the journaling integration, the standards wrapper — is small on its own. The interesting thing is what happens when they layer. The system stops feeling like a collection of tools and starts feeling like an environment shaped to fit me.

That shift is the compound effect. This post is the essay version of that observation: not a tutorial, just an account of what changed while I was building these pieces one at a time and not paying attention to what they added up to.

The pieces, recapped briefly

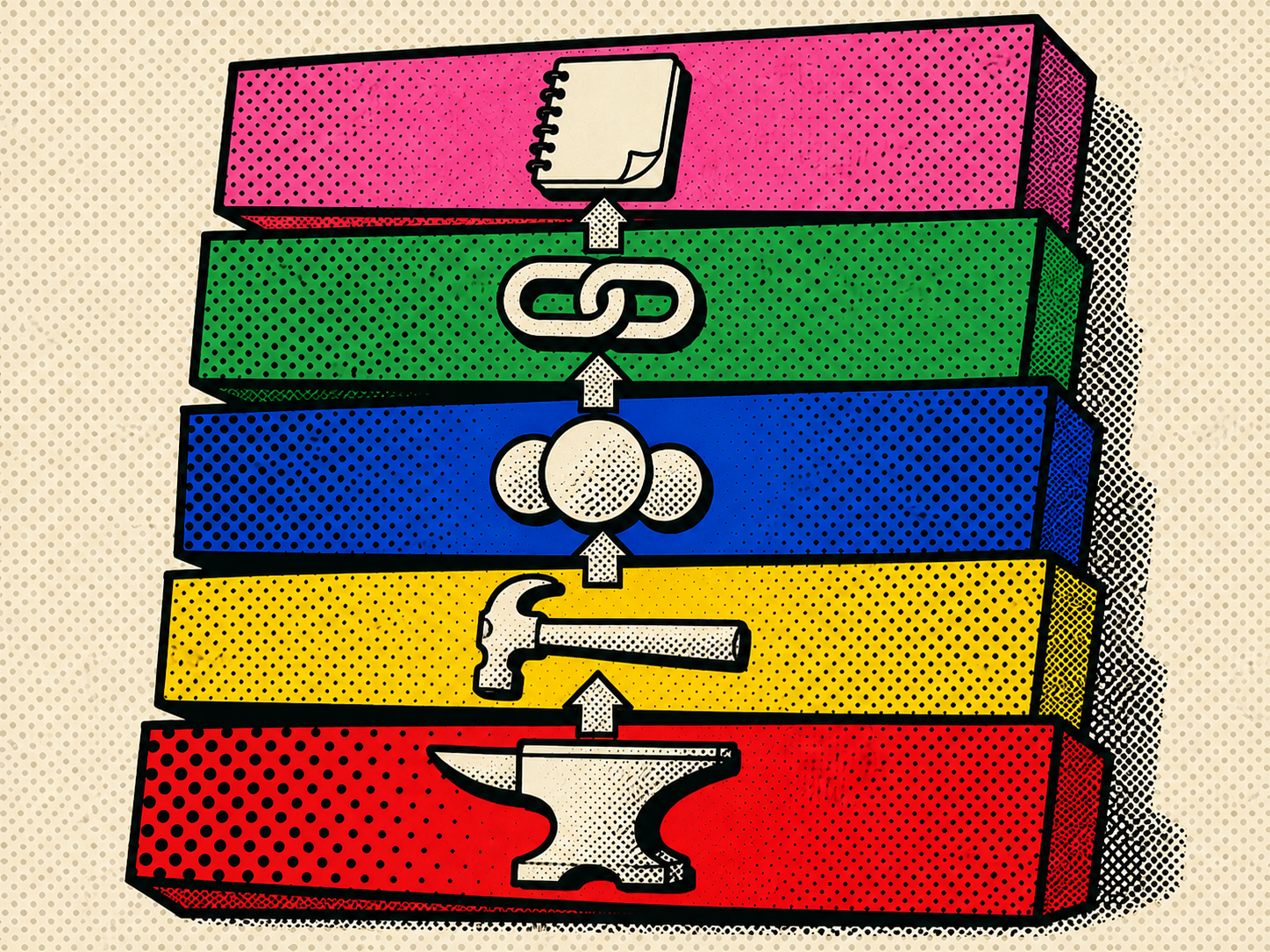

The earlier posts covered five pieces: rules that load project memory, skills that cache repeatable procedures, subagents that isolate specialized work, a portable layout that keeps the library tool-agnostic, and a journaling integration that closes the loop. Each one is small. The point is that they now work together.

Where the compounding starts

Each layer makes the layer above it cheaper.

Rules make skills better. When a skill runs through an agent that already has the right project rules loaded, the skill’s output conforms to the project’s conventions without any extra prompting. I don’t have to put style guidance in the skill body; it’s already in the rule. The skill body gets shorter and more focused.

Skills make subagents better. When a subagent is invoked, it inherits the rules and can call skills from its isolated context. The security-review subagent doesn’t have to know how to query the database; it can call the relevant debug skill. Specialization stacks on top of reusable infrastructure instead of reinventing it.

The portable layout makes the rest tool-agnostic. None of the rules, skills, or subagents are coupled to a specific AI coding agent. Switching tools — or running multiple tools on the same project — doesn’t reset the work.

The journaling integration closes the loop. The decisions I make using rules, skills, and subagents are captured in journal entries. The journal entries become inputs to the next day’s plan. The plan informs which rules to write or which skills to add. The system produces evidence about itself; I read that evidence, and it informs my next move.

The dependency graph runs from rules at the bottom to journaling at the top. Each layer takes the cost savings of the layer below and amplifies them. None of the layers, by itself, would be impressive. Together they produce something no single layer could.

What the system feels like, after a few months

Some specifics about how this changes the day-to-day, none of which I would have predicted at the start.

The blank-page problem mostly disappears. When I sit down to start a session, the agent already knows the project’s voice, conventions, structure, safety policies, and recent state. I’m not staring at an empty prompt trying to remember which framing to give it. The framing is preloaded.

Investigation is now faster than implementation. When something is wrong, I can usually have a structured account of what’s wrong within a minute or two. Debug skills inspect live state, the journaling history surfaces context, and the subagent reads policies if relevant. The agent assembles the answer faster than I could manually navigate to the relevant file.

I notice myself trusting the agent more, and appropriately so. When the agent generates a piece of code under the always-on behavioral rules and the security-review subagent gives it a clean bill of health, I trust the output more than I would have without that scaffolding. Not because the agent has gotten smarter, but because the system has gotten more trustworthy. The trust is in the audit, not in the original generation.

Reports nearly write themselves. The Friday-afternoon ritual that used to take three hours of writing now takes thirty minutes. That’s two and a half hours freed every week. Some of it I put to other use; the rest simply stops being on my plate.

The codebase stays cleaner. With the simplicity-first rule loaded, the surgical-changes rule loaded, the security-review subagent on call, and the journaling loop catching when I drift, the diffs that get committed are tighter than what I was producing before all of this existed. Quality went up; effort went down. That’s not a tradeoff; it’s a compound benefit.

Switching projects is faster. The vendor-agnostic layout means I can drop the same standards wrapper and the same skill library into a new project as a workspace folder, and within a few minutes the new project has the same scaffolding the old one did. Onboarding myself onto a fresh codebase takes far less mental effort than it used to.

Why it took layers

I want to be careful not to overstate the success of the system, because the path to it was unglamorous.

The first version of every layer was either too elaborate or too thin. The first rule file I wrote was too long; nobody, including the agent, read it carefully. The first skill I wrote was too tightly coupled to its codebase; it broke the next time I refactored. The first subagent I wrote tried to do everything; it was useless. The first journaling integration was scheduled to run daily without me; I hated it within a week.

Each layer went through a few rewrites before settling. The current shapes (small rules, narrow skills, read-only subagents with structured output, manually triggered journaling) are the shapes that survived contact with reality. They’re not the shapes I would have designed up front.

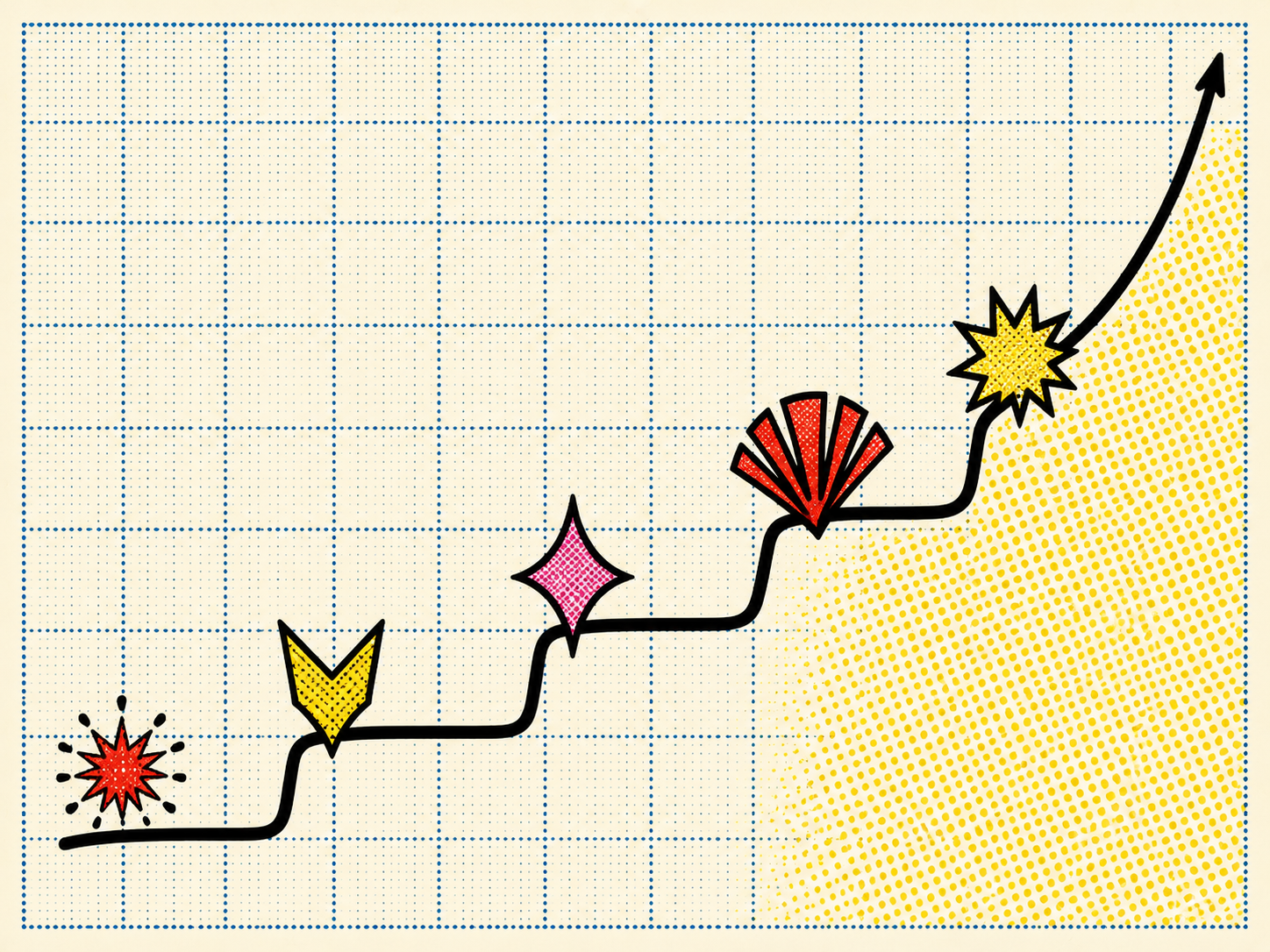

That’s worth saying out loud, because someone reading this series might think the obvious move is “build the same five layers I have, all at once”. It isn’t. The right move is to build one layer, use it for a while, notice where it falls short, and let the next layer emerge from the gap. Compound effects come from layering things that have each been pruned to fit. They don’t come from designing the whole stack on a whiteboard.

What this isn’t

This isn’t about productivity, in any naive sense. The work I do isn’t faster than other engineers’ work. What’s different is that the operating overhead of staying on top of a project (knowing its state, communicating about it, maintaining standards) has dropped dramatically. The work itself takes the time it takes.

This isn’t replacement. The agent is not doing my job. It’s removing the friction around my job. The judgment, the editorial choices, the architectural decisions, the conversations about priority — those are still on me.

A small philosophical observation

There’s a thing I’ve come to believe, watching this happen in my own work and in conversations with other people experimenting with similar setups.

We tend to think of AI tools as features added to an existing way of working. “Now my editor has autocomplete.” “Now my IDE has chat.” The model behind that framing is that the tool is a discrete addition to a stable workflow.

That’s not what’s actually happening, in the long run. What’s happening is that the surrounding workflow — the rules, skills, journaling, communication — is also reshaping itself around the tool’s strengths and weaknesses. The result is a co-adapted system. The human and the AI tool aren’t separate components; they’re parts of one workflow that has evolved to use both effectively.

The compound effect is what that co-adaptation looks like once it’s matured. It’s not the AI getting better. It’s the system getting better, of which the AI is one part. The other parts — the rules, the skills, the layout, the practices — were also shaped over time, and they’re doing as much of the work as the model is.

I think this matters for how to think about the next few years. People who treat AI tools as features they’re adopting in isolation will plateau. People who treat AI tools as something the workflow co-evolves with will keep compounding.

That’s the whole post. Five layers. Each small. The interesting thing is the stacking. The specific content is mine to write, but the pattern is portable: small layers, each pruned to fit, until the system feels like an environment instead of a toolset.